
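Preface

By default, instances of both new-style and old-style classes in Python have a dictionary for storing attribute values. This wastes space for objects that have few or no instance attributes, and the problem becomes especially acute when a large number of instances must be created.

This default can be overridden by defining a __slots__ attribute in a new-style class. The __slots__ declaration lists the instance variables and reserves just enough space in each instance to hold a value for each of them. Because no dictionary is created per instance, space is saved.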
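As a minimal sketch of the behaviour described above (the class names WithDict and WithSlots are made up for illustration), a slotted instance has no __dict__ and refuses attributes that were not declared:

```python
class WithDict(object):
    """Ordinary class: every instance carries its own __dict__."""

    def __init__(self, x):
        self.x = x


class WithSlots(object):
    """Slotted class: only the names listed in __slots__ can be stored."""

    __slots__ = ('x',)

    def __init__(self, x):
        self.x = x


plain = WithDict(1)
slotted = WithSlots(1)

print(hasattr(plain, '__dict__'))    # True
print(hasattr(slotted, '__dict__'))  # False

plain.y = 2  # fine: the new name is stored in plain.__dict__
try:
    slotted.y = 2  # no slot named 'y' and no __dict__ to fall back on
except AttributeError as exc:
    print('AttributeError:', exc)
```

The lost flexibility is the price of the saved memory: the set of attributes is fixed when the class is defined.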

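This article shows how to use __slots__ in Python to make your code more memory-efficient. It is shared here for reference and study, so without further ado, let's look at the details.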

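First, why does a dict waste more memory than a list in Python?

Compared with a list, a dict offers very fast lookup and insertion whose cost, on average, does not grow with the number of keys. The price is a large memory footprint with a lot of wasted space.

A list, by contrast, takes longer to search and to insert into as the number of elements grows, but it is compact and wastes very little memory.

The standard Python interpreter is CPython, where these two data structures correspond to a hash table and an array in C. A hash table needs extra memory to record the mapping between keys and values, whereas an array can compute the position of the next element from the index alone. A hash table therefore takes more memory than an array, which is why a dict takes more memory than a list.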
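The difference can be checked with a rough sketch like the one below. It assumes CPython and uses sys.getsizeof, which reports only the size of the container itself (the hash table or the pointer array), not of the objects stored in it. The variable names are illustrative.

```python
import sys

n = 1000
as_list = list(range(n))           # array of n object pointers
as_dict = dict.fromkeys(range(n))  # hash table with n keys, all values None

list_size = sys.getsizeof(as_list)
dict_size = sys.getsizeof(as_dict)

print("list: %d bytes, dict: %d bytes" % (list_size, dict_size))
```

The exact numbers depend on the interpreter version and platform, but the dict comes out several times larger than the list holding the same number of items.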

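For a more detailed picture, you can read the C source code. Official Python link:

The following snippets are excerpts I took from the official Python source: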

list source:

    typedef struct {
        PyObject_VAR_HEAD
        /* Vector of pointers to list elements.  list[0] is ob_item[0], etc. */
        PyObject **ob_item;

        /* ob_item contains space for 'allocated' elements.  The number
         * currently in use is ob_size.
         * Invariants:
         *     0 <= ob_size <= allocated
         *     len(list) == ob_size
         *     ob_item == NULL implies ob_size == allocated == 0
         * list.sort() temporarily sets allocated to -1 to detect mutations.
         *
         * Items must normally not be NULL, except during construction when
         * the list is not yet visible outside the function that builds it.
         */
        Py_ssize_t allocated;
    } PyListObject;

dict source:

    /* PyDict_MINSIZE is the minimum size of a dictionary.  This many slots are
     * allocated directly in the dict object (in the ma_smalltable member).
     * It must be a power of 2, and at least 4.  8 allows dicts with no more
     * than 5 active entries to live in ma_smalltable (and so avoid an
     * additional malloc); instrumentation suggested this suffices for the
     * majority of dicts (consisting mostly of usually-small instance dicts and
     * usually-small dicts created to pass keyword arguments).
     */
    #define PyDict_MINSIZE 8

    typedef struct {
        /* Cached hash code of me_key.  Note that hash codes are C longs.
         * We have to use Py_ssize_t instead because dict_popitem() abuses
         * me_hash to hold a search finger.
         */
        Py_ssize_t me_hash;
        PyObject *me_key;
        PyObject *me_value;
    } PyDictEntry;

    /*
    To ensure the lookup algorithm terminates, there must be at least one Unused
    slot (NULL key) in the table.
    The value ma_fill is the number of non-NULL keys (sum of Active and Dummy);
    ma_used is the number of non-NULL, non-dummy keys (== the number of non-NULL
    values == the number of Active items).
    To avoid slowing down lookups on a near-full table, we resize the table when
    it's two-thirds full.
    */
    typedef struct _dictobject PyDictObject;
    struct _dictobject {
        PyObject_HEAD
        Py_ssize_t ma_fill;  /* # Active + # Dummy */
        Py_ssize_t ma_used;  /* # Active */

        /* The table contains ma_mask + 1 slots, and that's a power of 2.
         * We store the mask instead of the size because the mask is more
         * frequently needed.
         */
        Py_ssize_t ma_mask;

        /* ma_table points to ma_smalltable for small tables, else to
         * additional malloc'ed memory.  ma_table is never NULL!  This rule
         * saves repeated runtime null-tests in the workhorse getitem and
         * setitem calls.
         */
        PyDictEntry *ma_table;
        PyDictEntry *(*ma_lookup)(PyDictObject *mp, PyObject *key, long hash);
        PyDictEntry ma_smalltable[PyDict_MINSIZE];
    };

PyObject_HEAD source:

    #ifdef Py_TRACE_REFS
    /* Define pointers to support a doubly-linked list of all live heap objects. */
    #define _PyObject_HEAD_EXTRA            \
        struct _object *_ob_next;           \
        struct _object *_ob_prev;

    #define _PyObject_EXTRA_INIT 0, 0,

    #else
    #define _PyObject_HEAD_EXTRA
    #define _PyObject_EXTRA_INIT
    #endif

    /* PyObject_HEAD defines the initial segment of every PyObject. */
    #define PyObject_HEAD                   \
        _PyObject_HEAD_EXTRA                \
        Py_ssize_t ob_refcnt;               \
        struct _typeobject *ob_type;

PyObject_VAR_HEAD source:

    /* PyObject_VAR_HEAD defines the initial segment of all variable-size
     * container objects.  These end with a declaration of an array with 1
     * element, but enough space is malloc'ed so that the array actually
     * has room for ob_size elements.  Note that ob_size is an element count,
     * not necessarily a byte count.
     */
    #define PyObject_VAR_HEAD               \
        PyObject_HEAD                       \
        Py_ssize_t ob_size; /* Number of items in variable part */

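Now we know why a dict takes more memory than a list. Next, let's see how to make your classes use less memory.

There are two solutions:

The first is to use __slots__; the other is to use collections.namedtuple.

First, write a class in the standard way: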

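    #!/usr/bin/env python
    # Assumption: @profile is the decorator from the third-party
    # memory_profiler package.
    from memory_profiler import profile


    class Foobar(object):
        def __init__(self, x):
            self.x = x


    @profile
    def main():
        # Keep one million instances alive at the same time.
        f = [Foobar(42) for i in range(1000000)]


    if __name__ == "__main__":
        main()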

This defines a class Foobar and instantiates it one million times. The @profile decorator reports the memory usage.

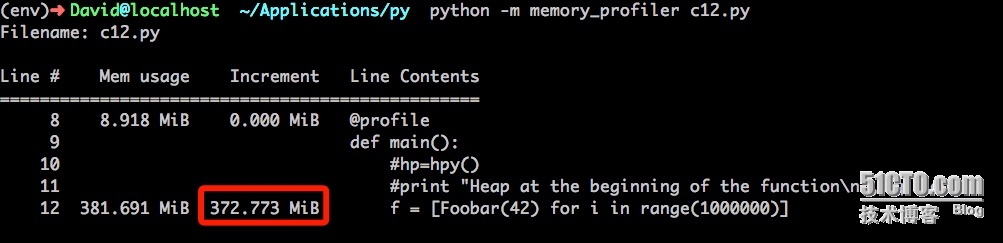

Result:

This code used 372 MB of memory in total.

Next, the same program written with __slots__:

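    #!/usr/bin/env python
    # Assumption: @profile is the decorator from the third-party
    # memory_profiler package.
    from memory_profiler import profile


    class Foobar(object):
        # Declare the only allowed attribute; no per-instance __dict__.
        __slots__ = ('x',)

        def __init__(self, x):
            self.x = x


    @profile
    def main():
        # Keep one million instances alive at the same time.
        f = [Foobar(42) for i in range(1000000)]


    if __name__ == "__main__":
        main()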

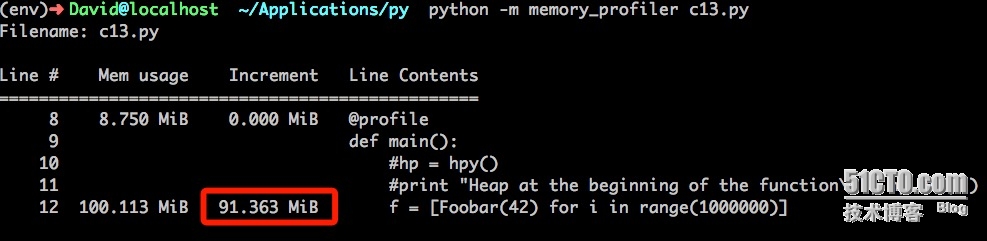

Result:

With __slots__ the program used 91 MB of memory, roughly a quarter of what it needed when attribute values were stored in __dict__.
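The comparison can also be reproduced without an external profiler. The sketch below uses tracemalloc from the standard library in place of @profile, so it is not the measurement method used above. The class names, the helper peak_bytes, and the smaller instance count are illustrative choices, and the absolute numbers will differ from the figures quoted above.

```python
import tracemalloc


class PlainFoobar(object):
    """Attributes live in a per-instance __dict__."""

    def __init__(self, x):
        self.x = x


class SlottedFoobar(object):
    """Attributes live in fixed slots; no per-instance __dict__."""

    __slots__ = ('x',)

    def __init__(self, x):
        self.x = x


def peak_bytes(cls, count=100000):
    """Return the peak traced allocation while `count` instances are alive."""
    tracemalloc.start()
    try:
        instances = [cls(42) for _ in range(count)]
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return peak


plain_peak = peak_bytes(PlainFoobar)
slotted_peak = peak_bytes(SlottedFoobar)

print("plain:   %.1f MB" % (plain_peak / 1e6))
print("slotted: %.1f MB" % (slotted_peak / 1e6))
```

The ratio between the two figures varies with the Python version, because newer CPython releases store instance attributes more compactly, but the slotted class should come out smaller.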

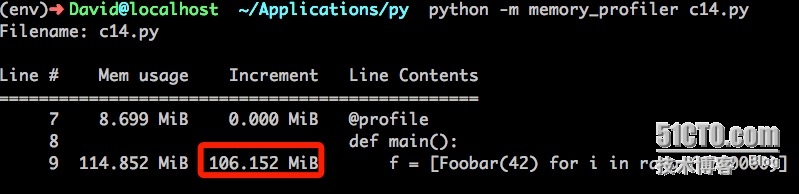

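The namedtuple from the collections module can in fact achieve the same effect as __slots__. A namedtuple class simply inherits from tuple, and its __slots__ is set to an empty tuple so that no __dict__ is created.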
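A short sketch of the namedtuple variant follows. It reuses the name Foobar for illustration only and shows the two properties mentioned above: the generated class is a tuple subclass, and its __slots__ is an empty tuple.

```python
from collections import namedtuple

# Generate a tuple subclass with a single named field 'x'.
Foobar = namedtuple('Foobar', ['x'])

f = Foobar(42)
print(f.x)                   # 42
print(isinstance(f, tuple))  # True
print(Foobar.__slots__)      # ()

# Unlike a slotted class, a namedtuple instance is immutable.
try:
    f.x = 43
except AttributeError as exc:
    print('AttributeError:', exc)
```

Immutability is the main practical difference from __slots__: a namedtuple suits records that never change after creation.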

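Let's look at how collections implements it:

Compared with an ordinary class definition, collections.namedtuple also saves a good deal of memory. So when a class's set of attributes is known to be fixed, __slots__ can be used to optimize memory. This technique should not be abused to make classes static or for similar purposes, though. That is not in the spirit of Python programs.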

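Summary

That is everything for this article. I hope it is of some value for your study or work. If you have questions, feel free to leave a comment. Thank you for supporting 移动技术网.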
