我是湖人中锋,测命格,鬼伎回忆录 下载

redis sentinel是redis的高可用方案。是redis 2.8中正式引入的。

在之前的主从复制方案中,如果主节点出现问题,需要手动将一个从节点升级为主节点,然后将其它从节点指向新的主节点,并且需要修改应用方主节点的地址。整个过程都需要人工干预。

下面通过日志具体看看sentinel的切换流程。

sentinel的切换流程

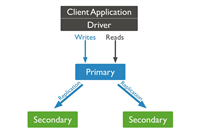

集群拓扑图如下。

角色 ip 端口 runid

主节点 127.0.0.1 6379

从节点-1 127.0.0.1 6380

从节点-2 127.0.0.1 6381

sentinel-1 127.0.0.1 26379 d4424b8684977767be4f5abd1e364153fbb0adbd

sentinel-2 127.0.0.1 26380 18311edfbfb7bf89fe4b67d08ef432053db62fff

sentinel-3 127.0.0.1 26381 3e9eb1aa9378d89cfe04fe21bf4a05a901747fa8

kill -9 将主节点进程杀死。

1. 最先反应的是从节点。

其会马上输出如下信息。

28244:s 08 oct 16:03:34.184 # connection with master lost. 28244:s 08 oct 16:03:34.184 * caching the disconnected master state. 28244:s 08 oct 16:03:34.548 * connecting to master 127.0.0.1:6379 28244:s 08 oct 16:03:34.548 * master <-> slave sync started 28244:s 08 oct 16:03:34.548 # error condition on socket for sync: connection refused 28244:s 08 oct 16:03:35.556 * connecting to master 127.0.0.1:6379 28244:s 08 oct 16:03:35.556 * master <-> slave sync started ...

2. sentinel的日志30s后才有输出,这个与“sentinel down-after-milliseconds mymaster 30000”的设置有关。

下面,依次贴出哨兵各个节点及slave的日志输出。

sentinel-1

28087:x 08 oct 16:04:04.277 # +sdown master mymaster 127.0.0.1 6379 28087:x 08 oct 16:04:04.379 # +new-epoch 1 28087:x 08 oct 16:04:04.385 # +vote-for-leader 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28087:x 08 oct 16:04:05.388 # +odown master mymaster 127.0.0.1 6379 #quorum 3/2 28087:x 08 oct 16:04:05.388 # next failover delay: i will not start a failover before mon oct 8 16:10:04 2018 28087:x 08 oct 16:04:05.631 # +config-update-from sentinel 18311edfbfb7bf89fe4b67d08ef432053db62fff 127.0.0.1 26380 @ mymaster 127.0.0.1 6379 28087:x 08 oct 16:04:05.631 # +switch-master mymaster 127.0.0.1 6379 127.0.0.1 6381 28087:x 08 oct 16:04:05.631 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6381 28087:x 08 oct 16:04:05.631 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381 28087:x 08 oct 16:04:35.656 # +sdown slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

sentinel-2

28163:x 08 oct 16:04:04.289 # +sdown master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.366 # +odown master mymaster 127.0.0.1 6379 #quorum 3/2 28163:x 08 oct 16:04:04.366 # +new-epoch 1 28163:x 08 oct 16:04:04.366 # +try-failover master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.373 # +vote-for-leader 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.385 # 3e9eb1aa9378d89cfe04fe21bf4a05a901747fa8 voted for 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.385 # d4424b8684977767be4f5abd1e364153fbb0adbd voted for 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.450 # +elected-leader master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.450 # +failover-state-select-slave master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.528 # +selected-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.528 * +failover-state-send-slaveof-noone slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.586 * +failover-state-wait-promotion slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:05.543 # +promoted-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:05.543 # +failover-state-reconf-slaves master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:05.629 * +slave-reconf-sent slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:06.554 # -odown master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:06.555 * +slave-reconf-inprog slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:06.555 * +slave-reconf-done slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:06.606 # +failover-end master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:06.606 # +switch-master mymaster 127.0.0.1 6379 127.0.0.1 6381 28163:x 08 oct 16:04:06.606 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6381 28163:x 08 oct 16:04:06.606 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381 28163:x 08 oct 16:04:36.687 # +sdown slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

sentinel-3

28234:x 08 oct 16:04:04.288 # +sdown master mymaster 127.0.0.1 6379 28234:x 08 oct 16:04:04.378 # +new-epoch 1 28234:x 08 oct 16:04:04.385 # +vote-for-leader 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28234:x 08 oct 16:04:04.385 # +odown master mymaster 127.0.0.1 6379 #quorum 2/2 28234:x 08 oct 16:04:04.385 # next failover delay: i will not start a failover before mon oct 8 16:10:04 2018 28234:x 08 oct 16:04:05.630 # +config-update-from sentinel 18311edfbfb7bf89fe4b67d08ef432053db62fff 127.0.0.1 26380 @ mymaster 127.0.0.1 6379 28234:x 08 oct 16:04:05.630 # +switch-master mymaster 127.0.0.1 6379 127.0.0.1 6381 28234:x 08 oct 16:04:05.630 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6381 28234:x 08 oct 16:04:05.630 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381 28234:x 08 oct 16:04:35.709 # +sdown slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

slave2

28244:s 08 oct 16:04:04.762 * master <-> slave sync started 28244:s 08 oct 16:04:04.762 # error condition on socket for sync: connection refused 28244:s 08 oct 16:04:05.630 * slave of 127.0.0.1:6381 enabled (user request from 'id=6 addr=127.0.0.1:43880 fd=12 name= age=148 idle=0 flags=n db=0 sub=0 psub=0 multi=-1 qbuf=224 qbuf-free= 32544 obl=81 oll=0 omem=0 events=r cmd=slaveof')28244:s 08 oct 16:04:05.636 # config rewrite executed with success. 28244:s 08 oct 16:04:05.770 * connecting to master 127.0.0.1:6381 28244:s 08 oct 16:04:05.770 * master <-> slave sync started 28244:s 08 oct 16:04:05.770 * non blocking connect for sync fired the event. 28244:s 08 oct 16:04:05.770 * master replied to ping, replication can continue... 28244:s 08 oct 16:04:05.770 * trying a partial resynchronization (request b95802ca8afd97c578b355a5838d219681d0af27:24302). 28244:s 08 oct 16:04:05.770 * successful partial resynchronization with master. 28244:s 08 oct 16:04:05.770 # master replication id changed to a4022bb5c361353a4773fd460cec5cdcc5c02031 28244:s 08 oct 16:04:05.770 * master <-> slave sync: master accepted a partial resynchronization.

slave3

28253:s 08 oct 16:04:03.655 * master <-> slave sync started 28253:s 08 oct 16:04:03.655 # error condition on socket for sync: connection refused 28253:m 08 oct 16:04:04.586 # setting secondary replication id to b95802ca8afd97c578b355a5838d219681d0af27, valid up to offset: 24302. new replication id is a4022bb5c361353a4773fd460cec5cdc c5c0203128253:m 08 oct 16:04:04.586 * discarding previously cached master state. 28253:m 08 oct 16:04:04.586 * master mode enabled (user request from 'id=9 addr=127.0.0.1:49316 fd=8 name=sentinel-18311edf-cmd age=137 idle=0 flags=x db=0 sub=0 psub=0 multi=3 qbuf=0 qbuf- free=32768 obl=36 oll=0 omem=0 events=r cmd=exec')28253:m 08 oct 16:04:04.593 # config rewrite executed with success. 28253:m 08 oct 16:04:05.770 * slave 127.0.0.1:6380 asks for synchronization 28253:m 08 oct 16:04:05.770 * partial resynchronization request from 127.0.0.1:6380 accepted. sending 156 bytes of backlog starting from offset 24302.

结合上面的日志,可以看到,

各个sentinel节点都判断127.0.0.1 6379为主观下线(subjectively down,缩写为sdown)。

28163:x 08 oct 16:04:04.289 # +sdown master mymaster 127.0.0.1 6379

达到quorum的设置,sentinel-2判断其为客观下线(objectively down,缩写为odown)。结合其它两个sentinel节点的日志,可以看到,sentinel-2最先判定其客观下线。接下来,会进行sentinel的领导者选举。一般来说,谁先完成客观下线的判定,谁就是领导者,只有sentinel领导者才能进行failover。

28163:x 08 oct 16:04:04.366 # +odown master mymaster 127.0.0.1 6379 #quorum 3/2 28163:x 08 oct 16:04:04.366 # +new-epoch 1 28163:x 08 oct 16:04:04.366 # +try-failover master mymaster 127.0.0.1 6379 28163:x 08 oct 16:04:04.373 # +vote-for-leader 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.385 # 3e9eb1aa9378d89cfe04fe21bf4a05a901747fa8 voted for 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.385 # d4424b8684977767be4f5abd1e364153fbb0adbd voted for 18311edfbfb7bf89fe4b67d08ef432053db62fff 1 28163:x 08 oct 16:04:04.450 # +elected-leader master mymaster 127.0.0.1 6379

寻找合适的slave作为master

28163:x 08 oct 16:04:04.450 # +failover-state-select-slave master mymaster 127.0.0.1 6379

+failover-state-select-slave <instance details> -- new failover state is select-slave: we are trying to find a suitable slave for promotion.

将127.0.0.1 6381设置为新主

28163:x 08 oct 16:04:04.528 # +selected-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379

+selected-slave <instance details> -- we found the specified good slave to promote.

命令6381节点执行slaveof no one,使其成为主节点

28163:x 08 oct 16:04:04.528 * +failover-state-send-slaveof-noone slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379

+failover-state-send-slaveof-noone <instance details> -- we are trying to reconfigure the promoted slave as master, waiting for it to switch.

等待6381节点升级为主节点

28163:x 08 oct 16:04:04.586 * +failover-state-wait-promotion slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379

确认6381节点已经升级为主节点

28163:x 08 oct 16:04:05.543 # +promoted-slave slave 127.0.0.1:6381 127.0.0.1 6381 @ mymaster 127.0.0.1 6379

再来看看16:04:04.528到16:04:05.543这个时间段slave3的日志输出。可以看到,其开启了master模式,且重写了配置文件。

28253:m 08 oct 16:04:04.586 # setting secondary replication id to b95802ca8afd97c578b355a5838d219681d0af27, valid up to offset: 24302. new replication id is a4022bb5c361353a4773fd460cec5cdcc5c02031

28253:m 08 oct 16:04:04.586 * discarding previously cached master state. 28253:m 08 oct 16:04:04.586 * master mode enabled (user request from 'id=9 addr=127.0.0.1:49316 fd=8 name=sentinel-18311edf-cmd age=137 idle=0 flags=x db=0 sub=0 psub=0 multi=3 qbuf=0 qbuf-free=32768 obl=36 oll=0 omem=0 events=r cmd=exec')

28253:m 08 oct 16:04:04.593 # config rewrite executed with success.

failover进入重新配置从节点阶段

28163:x 08 oct 16:04:05.543 # +failover-state-reconf-slaves master mymaster 127.0.0.1 6379

命令6380节点复制新的主节点

28163:x 08 oct 16:04:05.629 * +slave-reconf-sent slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379

+slave-reconf-sent <instance details> -- the leader sentinel sent the slaveof command to this instance in order to reconfigure it for the new slave.

看看这个时间点slave2的日志输出,基本吻合。其进行的是增量同步。

28244:s 08 oct 16:04:05.630 * slave of 127.0.0.1:6381 enabled (user request from 'id=6 addr=127.0.0.1:43880 fd=12 name= age=148 idle=0 flags=n db=0 sub=0 psub=0 multi=-1 qbuf=224 qbuf-free=32544 obl=81 oll=0 omem=0 events=r cmd=slaveof')

28244:s 08 oct 16:04:05.636 # config rewrite executed with success. 28244:s 08 oct 16:04:05.770 * connecting to master 127.0.0.1:6381 28244:s 08 oct 16:04:05.770 * master <-> slave sync started 28244:s 08 oct 16:04:05.770 * non blocking connect for sync fired the event. 28244:s 08 oct 16:04:05.770 * master replied to ping, replication can continue... 28244:s 08 oct 16:04:05.770 * trying a partial resynchronization (request b95802ca8afd97c578b355a5838d219681d0af27:24302). 28244:s 08 oct 16:04:05.770 * successful partial resynchronization with master. 28244:s 08 oct 16:04:05.770 # master replication id changed to a4022bb5c361353a4773fd460cec5cdcc5c02031 28244:s 08 oct 16:04:05.770 * master <-> slave sync: master accepted a partial resynchronization.

同时,在这个时间点,sentinel也有日志输出,以sentinel1为例。从日志中,可以看到,在这个时间点它会更改配置信息。

28087:x 08 oct 16:04:05.631 # +config-update-from sentinel 18311edfbfb7bf89fe4b67d08ef432053db62fff 127.0.0.1 26380 @ mymaster 127.0.0.1 6379 28087:x 08 oct 16:04:05.631 # +switch-master mymaster 127.0.0.1 6379 127.0.0.1 6381 28087:x 08 oct 16:04:05.631 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6381 28087:x 08 oct 16:04:05.631 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

switch-master <master name> <oldip> <oldport> <newip> <newport> -- the master new ip and address is the specified one after a configuration change. this is the message most external users are interested in.

同步过程尚未完成。

28163:x 08 oct 16:04:06.555 * +slave-reconf-inprog slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379

+slave-reconf-inprog <instance details> -- the slave being reconfigured showed to be a slave of the new master ip:port pair, but the synchronization process is not yet complete.

主从同步完成。

28163:x 08 oct 16:04:06.555 * +slave-reconf-done slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6379

+slave-reconf-done <instance details> -- the slave is now synchronized with the new master.

failover切换完成。

28163:x 08 oct 16:04:06.606 # +failover-end master mymaster 127.0.0.1 6379

failover成功后,发布主节点的切换消息

28163:x 08 oct 16:04:06.606 # +switch-master mymaster 127.0.0.1 6379 127.0.0.1 6381

关联新主节点的slave信息,需要注意的是,原来的主节点会作为新主节点的slave。

28163:x 08 oct 16:04:06.606 * +slave slave 127.0.0.1:6380 127.0.0.1 6380 @ mymaster 127.0.0.1 6381 28163:x 08 oct 16:04:06.606 * +slave slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

+slave <instance details> -- a new slave was detected and attached.

过了30s后,判定原来的主节点主观下线。

28163:x 08 oct 16:04:36.687 # +sdown slave 127.0.0.1:6379 127.0.0.1 6379 @ mymaster 127.0.0.1 6381

综合来看,sentinel进行failover的流程如下

1. 每隔1秒,每个sentinel节点会向主节点、从节点、其余sentinel节点发送一条ping命令做一次心跳检测,来确认这些节点当前是否可达。当这些节点超过down-after-milliseconds没有进行有效回复,sentinel节点就会判定该节点为主观下线。

2. 如果被判定为主观下线的节点是主节点,该sentinel节点会通过sentinel is master-down-by-addr命令向其他sentinel节点询问对主节点的判断,当超过<quorum>个数,sentinel节点会判定该节点为客观下线。如果从节点、sentinel节点被判定为主观下线,并不会进行后续的故障切换操作。

3. 对sentinel进行领导者选举,由其来进行后续的故障切换(failover)工作。选举算法基于raft。

4. sentinel领导者节点开始进行故障切换。

5. 选择合适的从节点作为新主节点。

6. sentinel领导者节点对上一步选出来的从节点执行slaveof no one命令让其成为主节点。

7. 向剩余的从节点发送命令,让它们成为新主节点的从节点,复制规则和parallel-syncs参数有关。

8. 将原来的主节点更新为从节点,并将其纳入到sentinel的管理,让其恢复后去复制新的主节点。

sentinel的领导者选举流程。

sentinel的领导者选举基于raft协议。

1. 每个在线的sentinel节点都有资格成为领导者,当它确认主节点主观下线时候,会向其他sentinel节点发送sentinel is-master-down-by-addr命令,要求将自己设置为领导者。

2. 收到命令的sentinel节点,如果没有同意过其他sentinel节点的sentinel is-master-down-by-addr命令,将同意该请求,否则拒绝。

3. 如果该sentinel节点发现自己的票数已经大于等于max(quorum,num(sentinels)/2+1),那么它将成为领导者。

新主节点的选择流程。

1. 删除所有已经处于下线或断线状态的从节点。

2. 删除最近5秒没有回复过领导者sentinel的info命令的从节点。

3. 删除所有与已下线主节点连接断开超过down-after-milliseconds*10毫秒的从节点。

4. 选择优先级最高的从节点。

5. 选择复制偏移量最大的从节点。

6. 选择runid最小的从节点。

三个定时监控任务

1. 每隔10秒,每个sentinel节点会向主节点和从节点发送info命令获取最新的拓扑结构。其作用如下:

1> 通过向主节点执行info命令,获取从节点的信息,这也是为什么sentinel节点不需要显式配置监控从节点。

2> 当有新的从节点加入时可立刻感知出来。

3> 节点不可达或者故障切换后,可通过info命令实时更新节点拓扑信息。

2. 每隔2秒,每个sentinel节点会向redis数据节点的__sentinel__:hello频道上发送该sentinel节点对于主节点的判断以及当前sentinel节点的信息,同时每个sentinel节点也会订阅该频道,来了解其它sentinel节点以及它们对主节点的判断。其作用如下:

1> 发现新的sentinel节点:通过订阅主节点的__sentinel__:hello了解其它sentinel节点信息,如果是新加入的sentinel节点,将该sentinel节点信息保存起来,并与该sentinel节点创建连接。

2> sentinel节点之间交换主节点的状态,作为后面客观下线以及领导者选举的依据。

3. 每隔1秒,每个sentinel节点会向主节点、从节点、其余sentinel节点发送一条ping命令做一次心跳检测,来确认这些节点当前是否可达。这个定时任务是节点失败判定的重要依据。

sentinel的相关参数

# bind 127.0.0.1 192.168.1.1 # protected-mode no port 26379 # sentinel announce-ip <ip> # sentinel announce-port <port> dir /tmp sentinel monitor mymaster 127.0.0.1 6379 2 # sentinel auth-pass <master-name> <password> sentinel down-after-milliseconds mymaster 30000 sentinel parallel-syncs mymaster 1 sentinel failover-timeout mymaster 180000 # sentinel notification-script mymaster /var/redis/notify.sh # sentinel client-reconfig-script mymaster /var/redis/reconfig.sh sentinel deny-scripts-reconfig yes

其中,

dir:设置sentinel的工作目录。

sentinel monitor mymaster 127.0.0.1 6379 2:其中2是quorum,即权重,代表至少需要两个sentinel节点认为主节点主观下线,才可判定主节点为客观下线。一般建议将其设置为sentinel节点的一半加1。不仅如此,quorum还与sentinel节点的领导者选举有关。为了选出sentinel的领导者,至少需要max(quorum, num(sentinels) / 2 + 1)个sentinel节点参与选举。

sentinel down-after-milliseconds mymaster 30000:每个sentinel节点都要通过定期发送ping命令来判断redis节点和其余sentinel节点是否可达。

如果在指定的时间内,没有收到主节点的有效回复,则判断其为主观下线。需要注意的是,该参数不仅用来判断主节点状态,同样也用来判断该主节点下面的从节点及其它sentinel的状态。其默认值为30s。

sentinel parallel-syncs mymaster 1:在failover期间,允许多少个slave同时指向新的主节点。如果numslaves设置较大的话,虽然复制操作并不会阻塞主节点,但多个节点同时指向新的主节点,会增加主节点的网络和磁盘io负载。

sentinel failover-timeout mymaster 180000:定义故障切换超时时间。默认180000,单位秒,即3min。需要注意的是,该时间不是总的故障切换的时间,而是适用于故障切换的多个场景。

# specifies the failover timeout in milliseconds. it is used in many ways: # # - the time needed to re-start a failover after a previous failover was # already tried against the same master by a given sentinel, is two # times the failover timeout. # # - the time needed for a slave replicating to a wrong master according # to a sentinel current configuration, to be forced to replicate # with the right master, is exactly the failover timeout (counting since # the moment a sentinel detected the misconfiguration). # # - the time needed to cancel a failover that is already in progress but # did not produced any configuration change (slaveof no one yet not # acknowledged by the promoted slave). # # - the maximum time a failover in progress waits for all the slaves to be # reconfigured as slaves of the new master. however even after this time # the slaves will be reconfigured by the sentinels anyway, but not with # the exact parallel-syncs progression as specified.

第一种适用场景:如果redis sentinel对一个主节点故障切换失败,那么下次再对该主节点做故障切换的起始时间是failover-timeout的2倍。这点从sentinel的日志就可体现出来(28234:x 08 oct 16:04:04.385 # next failover delay: i will not start a failover before mon oct 8 16:10:04 2018)

sentinel notification-script:定义通知脚本,当sentinel出现warning级别的事件时,会调用该脚本,其会传入两个参数:事件类型,事件描述。

sentinel client-reconfig-script:当主节点发生切换时,会调用该参数定义的脚本,其会传入以下参数:<master-name> <role> <state> <from-ip> <from-port> <to-ip> <to-port>

关于脚本,其必须遵循一定的规则。

# scripts execution # # sentinel notification-script and sentinel reconfig-script are used in order # to configure scripts that are called to notify the system administrator # or to reconfigure clients after a failover. the scripts are executed # with the following rules for error handling: # # if script exits with "1" the execution is retried later (up to a maximum # number of times currently set to 10). # # if script exits with "2" (or an higher value) the script execution is # not retried. # # if script terminates because it receives a signal the behavior is the same # as exit code 1. # # a script has a maximum running time of 60 seconds. after this limit is # reached the script is terminated with a sigkill and the execution retried.

sentinel deny-scripts-reconfig:不允许使用sentinel set设置notification-script和client-reconfig-script。

sentinel的常见操作

sentinel masters

输出被监控的主节点的状态信息

127.0.0.1:26379> sentinel masters

1) 1) "name"

2) "mymaster"

3) "ip"

4) "127.0.0.1"

5) "port"

6) "6379"

7) "runid"

8) "6ab2be5db3a37c10f2473c8fb9daed147a32df3e"

9) "flags"

10) "master"

11) "link-pending-commands"

12) "0"

13) "link-refcount"

14) "1"

15) "last-ping-sent"

16) "0"

17) "last-ok-ping-reply"

18) "639"

19) "last-ping-reply"

20) "639"

21) "down-after-milliseconds"

22) "30000"

23) "info-refresh"

24) "2075"

25) "role-reported"

26) "master"

27) "role-reported-time"

28) "759682"

29) "config-epoch"

30) "0"

31) "num-slaves"

32) "2"

33) "num-other-sentinels"

34) "2"

35) "quorum"

36) "2"

37) "failover-timeout"

38) "180000"

39) "parallel-syncs"

40) "1"

也可单独查看某个主节点的状态

sentinel master mymaster

sentinel slaves mymaster

查看某个主节点slave的状态

127.0.0.1:26379> sentinel slaves mymaster

1) 1) "name"

2) "127.0.0.1:6380"

3) "ip"

4) "127.0.0.1"

5) "port"

6) "6380"

7) "runid"

8) "983b87fd070c7f052b26f5135bbb30fdeb170a54"

9) "flags"

10) "slave"

11) "link-pending-commands"

12) "0"

13) "link-refcount"

14) "1"

15) "last-ping-sent"

16) "0"

17) "last-ok-ping-reply"

18) "178"

19) "last-ping-reply"

20) "178"

21) "down-after-milliseconds"

22) "30000"

23) "info-refresh"

24) "6160"

25) "role-reported"

26) "slave"

27) "role-reported-time"

28) "489019"

29) "master-link-down-time"

30) "0"

31) "master-link-status"

32) "ok"

33) "master-host"

34) "127.0.0.1"

35) "master-port"

36) "6379"

37) "slave-priority"

38) "100"

39) "slave-repl-offset"

40) "70375"

2) 1) "name"

2) "127.0.0.1:6381"

3) "ip"

4) "127.0.0.1"

5) "port"

6) "6381"

7) "runid"

8) "b88059cce9104dd4e0366afd6ad07a163dae8b15"

9) "flags"

10) "slave"

11) "link-pending-commands"

12) "0"

13) "link-refcount"

14) "1"

15) "last-ping-sent"

16) "0"

17) "last-ok-ping-reply"

18) "178"

19) "last-ping-reply"

20) "178"

21) "down-after-milliseconds"

22) "30000"

23) "info-refresh"

24) "2918"

25) "role-reported"

26) "slave"

27) "role-reported-time"

28) "489019"

29) "master-link-down-time"

30) "0"

31) "master-link-status"

32) "ok"

33) "master-host"

34) "127.0.0.1"

35) "master-port"

36) "6379"

37) "slave-priority"

38) "100"

39) "slave-repl-offset"

40) "71040"

sentinel sentinels mymaster

查看其它sentinel的状态

127.0.0.1:26379> sentinel sentinels mymaster

1) 1) "name"

2) "738ccbddaa0d4379d89a147613d9aecfec765bcb"

3) "ip"

4) "127.0.0.1"

5) "port"

6) "26381"

7) "runid"

8) "738ccbddaa0d4379d89a147613d9aecfec765bcb"

9) "flags"

10) "sentinel"

11) "link-pending-commands"

12) "0"

13) "link-refcount"

14) "1"

15) "last-ping-sent"

16) "0"

17) "last-ok-ping-reply"

18) "475"

19) "last-ping-reply"

20) "475"

21) "down-after-milliseconds"

22) "30000"

23) "last-hello-message"

24) "79"

25) "voted-leader"

26) "?"

27) "voted-leader-epoch"

28) "0"

2) 1) "name"

2) "7251bb129ca373ad0d8c7baf3b6577ae2593079f"

3) "ip"

4) "127.0.0.1"

5) "port"

6) "26380"

7) "runid"

8) "7251bb129ca373ad0d8c7baf3b6577ae2593079f"

9) "flags"

10) "sentinel"

11) "link-pending-commands"

12) "0"

13) "link-refcount"

14) "1"

15) "last-ping-sent"

16) "0"

17) "last-ok-ping-reply"

18) "475"

19) "last-ping-reply"

20) "475"

21) "down-after-milliseconds"

22) "30000"

23) "last-hello-message"

24) "985"

25) "voted-leader"

26) "?"

27) "voted-leader-epoch"

28) "0"

sentinel get-master-addr-by-name <master name>

返回指定<master name>主节点的ip地址和端口。如果在进行故障切换,则显示的是新主的信息。

127.0.0.1:26379> sentinel get-master-addr-by-name mymaster 1) "127.0.0.1" 2) "6379"

sentinel reset <pattern>

对符合<pattern>(通配符风格)主节点的配置进行重置。

如果某个slave宕机了,其依然处于sentinel的管理中,所以,在其恢复正常后,其依然会加入到之前的复制环境中,即使配置文件中没有指定slaveof选项。不仅如此,如果主节点宕机了,在其重启后,其默认会作为从节点接入到之前的复制环境中。

但很多时候,我们可能就是想移除old master,slave,这个时候,sentinel reset就派上用场了。其会基于当前主节点的状态,重置其配置(they'll refresh the list of slaves within the next 10 seconds, only adding the ones listed as correctly replicating from the current master info output)。关键的是,对于非正常状态的slave,会从当前的配置中剔除。这样,被剔除节点在恢复正常后(注意此时的配置文件,需剔除slaveof的配置),也不会自动加入到之前的复制环境中。

需要注意的是,该命令仅对当前sentinel节点有效,如果要剔除某个节点,需要在所有的sentinel节点上执行reset操作。

sentinel failover <master name>

对指定 <master name> 主节点进行强制故障切换。相对于常规的故障切换,其无需进行sentinel节点的领导者选举。直接由当前sentinel节点进行后续的故障切换。

sentinel ckquorum <master name>

检测当前可达的sentinel节点总数是否达到<quorum>的个数

127.0.0.1:26379> sentinel ckquorum mymaster ok 3 usable sentinels. quorum and failover authorization can be reached

sentinel flushconfig

将sentinel节点的配置信息强制刷到磁盘上,这个命令sentinel节点自身用得比较多,对于开发和运维人员只有当外部原因(例如磁盘损坏)造成配置文件损坏或者丢失时,才会用上。

sentinel remove <master name>

取消当前sentinel节点对于指定<master name>主节点的监控。

[root@slowtech redis-4.0.11]# grep -ev "^#|^$" sentinel_26379.conf port 26379 dir "/tmp" sentinel myid 2467530fa249dbbc435c50fbb0dc2a4e766146f8 sentinel deny-scripts-reconfig yes sentinel monitor mymaster 127.0.0.1 6381 2 sentinel config-epoch mymaster 12 sentinel leader-epoch mymaster 0 sentinel known-slave mymaster 127.0.0.1 6380 sentinel known-slave mymaster 127.0.0.1 6379 sentinel known-sentinel mymaster 127.0.0.1 26381 738ccbddaa0d4379d89a147613d9aecfec765bcb sentinel known-sentinel mymaster 127.0.0.1 26380 7251bb129ca373ad0d8c7baf3b6577ae2593079f sentinel current-epoch 12 [root@slowtech redis-4.0.11]# redis-cli -p 26379 127.0.0.1:26379> sentinel remove mymaster ok 127.0.0.1:26379> quit [root@slowtech redis-4.0.11]# grep -ev "^#|^$" sentinel_26379.conf port 26379 dir "/tmp" sentinel myid 2467530fa249dbbc435c50fbb0dc2a4e766146f8 sentinel deny-scripts-reconfig yes sentinel current-epoch 12

sentinel set <name> <option> <value>

参数 用法

quorum sentinel set mymaster quorum 3

down-after-milliseconds sentinel set mymaster down-after-milliseconds 30000

failover-timeout sentinel set mymaster failover-timeout 18000

parallel-syncs sentinel set mymaster parallel-syncs 3

notification-script sentinel set mymaster notification-script /tmp/a.sh

client-reconfig-script sentinel set mymaster client-reconfig-script /tmp/b.sh

auth-pass sentinel set mymaster auth-pass masterpassword

需要注意的是:

1. sentinel set命令只对当前sentinel节点有效。

2. sentinel set命令如果执行成功会立即刷新配置文件,这点和redis普通数据节点不同,后者修改完配置后,需要执行config rewrite刷新到配置文件。

3. 建议所有sentinel节点的配置尽可能一致。

4. sentinel不支持config命令。如何要查看参数的设置,可痛过sentinel master命令查看。

参考:

1. 《redis开发与运维》

2. 《redis设计与实现》

3. 《redis 4.x cookbook》

4.

如对本文有疑问,请在下面进行留言讨论,广大热心网友会与你互动!! 点击进行留言回复

理解Redis持久化,RDB持久化和AOF持久化的不同处理方式

Redis 两类持久化方式,快照和全量追加日志的不同处理方式

网友评论