
I previously covered using PhantomJS as a crawler to scrape web pages; that approach worked together with selectors.

With the Python module BeautifulSoup (documentation: ), scraping the content of a web page becomes very easy.

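# coding=utf-8
# Python 3: urlencode and urlopen live in urllib.parse and urllib.request
from urllib.parse import urlencode
from urllib.request import urlopen

from bs4 import BeautifulSoup

url = 'http://www.baidu.com/s'
values = {'wd': '网球'}  # search term: "tennis"

# Append the URL-encoded query string to the search URL
encoded_param = urlencode(values)
full_url = url + '?' + encoded_param

# Download the result page and parse it
response = urlopen(full_url)
soup = BeautifulSoup(response, 'html.parser')

# Collect every <a> tag on the page
alinks = soup.find_all('a')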

The code above fetches Baidu's search results page for 网球 ("tennis") and collects every link on it.
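As a rough sketch of what could be done with the collected links, the snippet below turns `<a>` tags into (text, href) pairs. The `sample_html` string is a made-up stand-in for a downloaded page (it is not real Baidu markup), and the name `results` is only illustrative.

```python
from bs4 import BeautifulSoup

# Hypothetical stand-in for a downloaded result page (not real Baidu markup).
sample_html = """
<div>
<a href="/link?id=1">Tennis news</a>
<a>anchor without href</a>
<a href="/link?id=2">Tennis rules</a>
</div>
"""

soup = BeautifulSoup(sample_html, 'html.parser')

# href=True keeps only the <a> tags that actually carry an href attribute.
results = [(a.get_text(strip=True), a['href'])
           for a in soup.find_all('a', href=True)]

for text, href in results:
    print(text, href)
```

Filtering with `href=True` avoids a `KeyError` on anchors that have no `href` attribute.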

BeautifulSoup comes with many very useful built-in methods.

A few of the handier features:

Constructing a node (tag) element
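A minimal sketch of how such a tag object can be obtained, assuming a one-element document in the style of the BeautifulSoup documentation (the markup and the `boldest` class are assumptions, not taken from this post):

```python
from bs4 import BeautifulSoup

# Parse a one-tag document; 'html.parser' is the parser bundled with Python.
soup = BeautifulSoup('<b class="boldest">extremely bold</b>', 'html.parser')

tag = soup.b                 # first <b> element in the document
print(type(tag).__name__)    # Tag
print(tag.name)              # b

# Renaming the tag changes the markup that BeautifulSoup generates.
tag.name = 'blockquote'
print(tag)
# <blockquote class="boldest">extremely bold</blockquote>
```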

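A tag's attributes are available through `.attrs`, which returns a dictionary.

A single attribute can also be read directly with dictionary-style access, e.g. tag['class'].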
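The two ways of reading attributes can be sketched as follows. The markup, including the `id="q1"` value, is a hypothetical example chosen for illustration.

```python
from bs4 import BeautifulSoup

markup = '<blockquote class="boldest" id="q1">extremely bold</blockquote>'
soup = BeautifulSoup(markup, 'html.parser')
tag = soup.blockquote

# .attrs is a dictionary of every attribute on the tag.
# class is a multi-valued attribute in HTML, so its value is a list.
print(tag.attrs)         # {'class': ['boldest'], 'id': 'q1'}

# Dictionary-style access reads one attribute.
print(tag['class'])      # ['boldest']
print(tag['id'])         # q1

# .get() returns None instead of raising KeyError for a missing attribute.
print(tag.get('title'))  # None
```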

Attributes can also be modified freely:

# `tag` is the <blockquote> element with the text "extremely bold"
tag['class'] = 'verybold'
tag['id'] = 1
tag
# <blockquote class="verybold" id="1">extremely bold</blockquote>

del tag['class']
del tag['id']
tag
# <blockquote>extremely bold</blockquote>

tag['class']
# KeyError: 'class'
print(tag.get('class'))
# None

You can also freely navigate and search DOM elements, as in the example below.

1. Build a document

html_doc = """<html><head><title>the dormouse's story</title></head>
<body>
<p><b>the dormouse's story</b></p>
<p>once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1">elsie</a>,
<a class="sister" href="http://example.com/lacie" id="link2">lacie</a> and
<a class="sister" href="http://example.com/tillie" id="link3">tillie</a>;
and they lived at the bottom of a well.</p>
<p>...</p>
"""

from bs4 import BeautifulSoup
# No parser is named, so bs4 picks the best one installed;
# whitespace-only nodes, and therefore some counts, can vary by parser.
soup = BeautifulSoup(html_doc)

2. Navigate and search it in various ways

soup.head
# <head><title>the dormouse's story</title></head>
soup.title
# <title>the dormouse's story</title>
soup.body.b
# <b>the dormouse's story</b>
soup.a
# <a class="sister" href="http://example.com/elsie" id="link1">elsie</a>

soup.find_all('a')
# [<a class="sister" href="http://example.com/elsie" id="link1">elsie</a>,
#  <a class="sister" href="http://example.com/lacie" id="link2">lacie</a>,
#  <a class="sister" href="http://example.com/tillie" id="link3">tillie</a>]

head_tag = soup.head
head_tag
# <head><title>the dormouse's story</title></head>

head_tag.contents
# [<title>the dormouse's story</title>]

title_tag = head_tag.contents[0]
title_tag
# <title>the dormouse's story</title>
title_tag.contents
# ["the dormouse's story"]

len(soup.contents)
# 1
soup.contents[0].name
# 'html'

# A string cannot contain other nodes, so it has no .contents
text = title_tag.contents[0]
text.contents
# AttributeError: 'NavigableString' object has no attribute 'contents'

# .children iterates over direct children only
for child in title_tag.children:
    print(child)
# the dormouse's story

head_tag.contents
# [<title>the dormouse's story</title>]

# .descendants walks children, grandchildren, and so on
for child in head_tag.descendants:
    print(child)
# <title>the dormouse's story</title>
# the dormouse's story

len(list(soup.children))
# 1
len(list(soup.descendants))
# 25

title_tag.string
# "the dormouse's story"
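Beyond walking the tree, elements can also be looked up with filters. The following is only a sketch, using a shortened copy of the sample document and the standard `find` / `find_all` calls; the variable names are illustrative.

```python
from bs4 import BeautifulSoup

html_doc = """<html><head><title>the dormouse's story</title></head>
<body>
<p>once upon a time there were three little sisters; and their names were
<a class="sister" href="http://example.com/elsie" id="link1">elsie</a>,
<a class="sister" href="http://example.com/lacie" id="link2">lacie</a> and
<a class="sister" href="http://example.com/tillie" id="link3">tillie</a>;
and they lived at the bottom of a well.</p>
</body></html>"""

soup = BeautifulSoup(html_doc, 'html.parser')

# Pull the href out of every link.
hrefs = [a.get('href') for a in soup.find_all('a')]

# Look up a single element by its id attribute.
third = soup.find(id='link3')

# Filter by CSS class; the keyword is class_ because class is reserved in Python.
sisters = soup.find_all('a', class_='sister')

# get_text() returns the text inside a tag without the markup.
title_text = soup.title.get_text()

print(hrefs)
print(third.get_text(), len(sisters), title_text)
```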

如对本文有疑问,请在下面进行留言讨论,广大热心网友会与你互动!! 点击进行留言回复

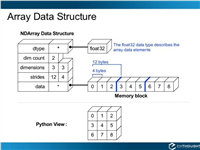

Python 实现将numpy中的nan和inf,nan替换成对应的均值

python爬虫把url链接编码成gbk2312格式过程解析

网友评论