
import requests

from lxml import etree

from urllib import parse

import os, time

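def get_page_html(url):
    '''Send a GET request to url; return the response, or None on failure.'''
    try:
        # The request itself can raise (timeout, connection error), so it belongs inside the try.
        response = session.get(url, headers=headers, timeout=timeout)
        if response.status_code == 200:
            return response
    except requests.RequestException:
        pass
    return None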

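def get_next_url(response):
    '''Return the absolute URL of the next chapter, or None if there is no next link.'''
    if response:
        try:
            selector = etree.HTML(response.text)
            # XPath is case-sensitive; the id must match the page markup exactly.
            url = selector.xpath("//a[@id='j_chapterNext']/@href")[0]
            # The href may be relative or protocol-relative, so resolve it against the page URL.
            return parse.urljoin(response.url, url)
        except IndexError:
            return None
    return None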

def xs_content(response):
    '''Return (chapter title, list of paragraph strings), or None if they cannot be extracted.'''
    if response:
        selector = etree.HTML(response.text)
        titles = selector.xpath("//h3[@class='j_chapterName']/text()")
        if not titles:
            return None
        content = selector.xpath(
            "//div[contains(@class,'read-content') and contains(@class,'j_readContent')]//p/text()")
        return titles[0], content
    return None

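def write_to_txt(info_tuple: tuple):
    '''Write one chapter to <base_path>/<title>.txt unless that file already exists.'''
    if not info_tuple:
        return
    os.makedirs(base_path, exist_ok=True)  # Create the output directory if it is missing.
    # Include the ".txt" suffix in the path that is checked, so existing chapters are skipped.
    path = os.path.join(base_path, info_tuple[0] + ".txt")
    if not os.path.exists(path):
        with open(path, "wt", encoding="utf-8") as f:
            for line in info_tuple[1]:
                f.write(line + "\n")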

def run(url):
    '''Crawl the chapter at url, save it, then continue with the next chapter.'''
    html = get_page_html(url)
    next_url = get_next_url(html)
    info_tuple = xs_content(html)
    if next_url and info_tuple:
        print("Writing to file")
        write_to_txt(info_tuple)
        time.sleep(sleep_time)  # Delay between requests to reduce the load on the server.
        print("Crawled %s" % info_tuple[0])
        print("Crawling %s" % next_url)
        run(next_url)

if __name__ == '__main__':
    session = requests.Session()
    sleep_time = 5  # Seconds to wait between requests.
    timeout = 5  # Request timeout in seconds.
    base_path = r"d:\图片\lszj"  # Directory where the chapter files are saved.
    # URL of the first chapter of the novel to crawl (here, chapter one of 斗破苍穹).
    url = "https://read.qidian.com/chapter/8iw8dkb_ztxrzk4x-cujuw2/fwjwroiobhn4p8iew--ppw2"
    headers = {
        "referer": "read.qidian.com",
        "user-agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/72.0.3626.121 Safari/537.36"
    }
    print('Starting the crawler')
    run(url)
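
Because `run` calls itself once per chapter, a novel with more chapters than Python's recursion limit (1000 frames by default) would likely end in a `RecursionError`. One possible alternative is a plain loop. The sketch below is not the article's code: `crawl`, `fetch`, `save`, `delay` and `max_pages` are hypothetical names introduced for illustration, and `fetch` is assumed to return a `(next_url, chapter)` pair with `next_url` set to `None` on the last page.

```python
import time


def crawl(start_url, fetch, save, delay=0.0, max_pages=None):
    """Follow next-page links in a loop instead of recursing.

    fetch(url) -> (next_url, chapter)   # hypothetical callback, next_url is None at the end
    save(chapter)                       # hypothetical callback, e.g. writes a text file
    Returns the number of chapters saved.
    """
    url = start_url
    seen = set()   # guards against a page that links back to an earlier one
    count = 0
    while url and url not in seen:
        if max_pages is not None and count >= max_pages:
            break
        seen.add(url)
        next_url, chapter = fetch(url)
        if chapter:
            save(chapter)
            count += 1
        url = next_url
        if url and delay:
            time.sleep(delay)  # pause between requests to reduce server load
    return count


if __name__ == "__main__":
    # Offline demo with fake pages, so no network access is needed.
    pages = {
        "p1": ("p2", ("Chapter 1", ["first line"])),
        "p2": (None, ("Chapter 2", ["second line"])),
    }
    saved = []
    print(crawl("p1", lambda u: pages[u], saved.append))
    print([title for title, _ in saved])
```

With the functions above, `fetch` could be a small wrapper that calls `get_page_html`, then `get_next_url` and `xs_content` on the response, and `save` could be `write_to_txt`. Unlike the recursive `run`, this loop also saves the final chapter, since saving does not depend on a next link being present.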

如对本文有疑问,请在下面进行留言讨论,广大热心网友会与你互动!! 点击进行留言回复

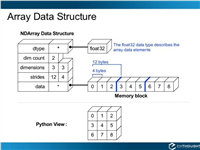

Python 实现将numpy中的nan和inf,nan替换成对应的均值

python爬虫把url链接编码成gbk2312格式过程解析

网友评论